Should We Be Afraid Of Deepfakes Or Be Hopeful?

Deepfakes, powered by AI and ML, produce high-quality fake images and videos, but should we be afraid of this technology? A few deepfake recordings have become web sensations, giving millions around the world their first taste of the technology.

The underlying technology grew out of generative adversarial networks (GANs), which computer scientist Ian Goodfellow introduced in 2014. GANs are now strong enough to recreate a person from a five-second video clip or a single image of that person. The term "deepfake" was coined in 2017, and the results have become more and more convincing over time. The technology was first used to make pornographic deepfakes of celebrities. People later used it to spread fake news, malicious content, and hoax footage of famous individuals with completely altered words.

Recent viral examples include President Obama using an expletive to describe President Trump, and Mark Zuckerberg admitting that Facebook's real goal is to manipulate and exploit its users. Both videos are deepfakes.

However, the idea is not new: computer science researchers have worked on this kind of video manipulation since the 1990s. The Obama deepfake, published in 2017, showed what the technology is capable of, and, remarkably, much of the significant work in this field was done by amateurs.

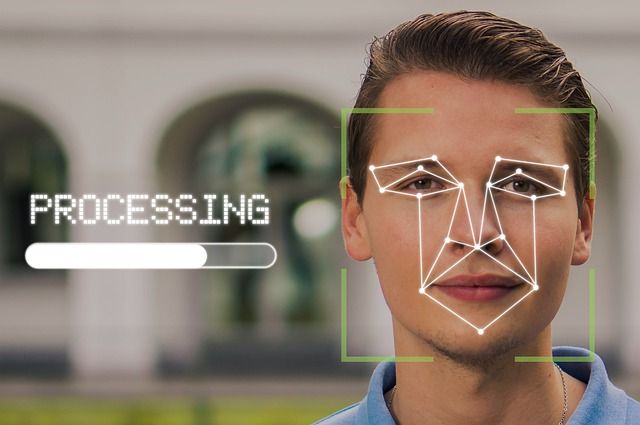

WHAT IS A DEEPFAKE?

The word combines two terms: "deep learning" and "fake." Deep learning uses neural networks trained on extensive data to replicate patterns. Combined with modern computing power, machine learning can efficiently create convincing fake videos, audio, and photos. These look so authentic that the audience cannot tell the real from the fake. Deeptrace reported approximately 7,964 deepfake videos online at the beginning of 2019, a number that rose to 14,678 nine months later. Further, Sensity reported that 49,081 deepfake videos had been uploaded since 2019, an increase of 330%.
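
To put the Deeptrace counts in perspective, the growth rate can be worked out directly. The short sketch below is only arithmetic on the two figures quoted above; the function name `percent_increase` is chosen here for illustration. It comes out at roughly 84% growth in nine months. By the same formula, a 330% increase means about 4.3 times the starting value, although the source does not state which baseline Sensity used.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the relative growth from old to new, in percent."""
    if old <= 0:
        raise ValueError("old must be positive")
    return (new - old) / old * 100.0


# Deeptrace counts quoted above: start of 2019 vs. nine months later.
growth = percent_increase(7964, 14678)
print(f"Deepfake videos grew by about {growth:.0f}% in nine months")
```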

HOW TO CREATE A DEEPFAKE?

The leading technology behind deepfake generation is the combination of generative adversarial networks (GANs) with autoencoders.

- A GAN is a type of AI with two parts: one creates a deepfake while the other checks how authentic it looks. The system learns from its mistakes and avoids them in the next round, and so on. A GAN comprises two neural nets: a generator and a discriminator. The two compete constantly, which helps the system learn quickly. The generator creates a fake image while the discriminator tries its best to identify whether it’s a deepfake or not. If the discriminator fails to identify the generator's fake, it uses that feedback to judge better next time. On the other hand, if it determines the content is fake, the generator produces a more accurate fake image than before; it’s a long-running process.

- Autoencoders are neural networks that first learn to encode an image's features and then decode them to reconstruct or alter the image.
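
The generator-versus-discriminator contest described above can be sketched with a deliberately tiny example. This is not how production deepfake tools are built: it assumes one-dimensional "data" instead of images, a two-parameter generator, a logistic-regression discriminator, and hand-written gradients. The names `train_toy_gan` and `sigmoid` and the target distribution (values centred on 4.0) are made up for illustration.

```python
import math
import random


def sigmoid(t: float) -> float:
    """Numerically stable logistic function."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 2000, lr: float = 0.01, seed: int = 0):
    """Train a 1-D toy GAN; returns generator (a, b) and discriminator (w, c)."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator: fake = a * noise + b
    w, c = 0.0, 0.0  # discriminator: P(real | x) = sigmoid(w * x + c)
    for _ in range(steps):
        real = rng.gauss(4.0, 0.5)  # hypothetical stand-in for real data
        z = rng.gauss(0.0, 1.0)     # random noise fed to the generator
        fake = a * z + b

        # Discriminator step: raise its score for real, lower it for fake
        # (gradient ascent on log D(real) + log(1 - D(fake))).
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - d_real) * real - d_fake * fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Generator step: adjust (a, b) so the updated discriminator
        # rates the same fake sample as more real (ascent on log D(fake)).
        d_fake = sigmoid(w * fake + c)
        grad_fake = (1.0 - d_fake) * w  # derivative of log D(fake) w.r.t. fake
        a += lr * grad_fake * z
        b += lr * grad_fake
    return (a, b), (w, c)


(gen_a, gen_b), (disc_w, disc_c) = train_toy_gan()
print(f"generator offset after training: {gen_b:.2f} (real data is centred on 4.0)")
```

Each pass through the loop is one round of the contest: the discriminator is updated first, then the generator uses the discriminator's judgment as its only training signal. Training like this can oscillate rather than settle, which is one reason real GAN training is a long-running process.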

IS IT SOMETHING TO BE WORRIED ABOUT IN THE FUTURE?

People with malicious intentions take advantage of this new technology and use it to hurt innocent people. Deepfakes and GANs have become popular well beyond engineering and research circles.

Does this concept terrify you? Deepfakes are easy to create and quick to spread in a legislative vacuum. It's time to think about this seriously: people have already used the technology to make pornographic content of famous actors. It can also be used to frame someone for a crime they didn’t commit or to influence people during an election. Another significant issue is how fast news spreads in the social media era. A study of Twitter showed that people spread fake news faster than real news. Moreover, people who commit hostile acts may deny them and blame deepfakes to feign innocence.

Deepfakes also have benefits; the sections below discuss the pros and cons of the technology one by one.

DEEPFAKE CONS

Deepfakes can quickly fabricate media via lip-syncing, face swapping, and puppeteering without the subject's consent. This, in turn, harms personal security, disrupts businesses, and undermines political stability. Malicious actors also use it to destroy reputations and defraud audiences with fake evidence. The situation has reached an alarming state in the last two years due to malicious AI-generated content. The technology harms individuals and erodes trust in the media, since anyone can generate a fake video and claim it's real, and anyone can claim a real video is fake. Hiding genuine misconduct behind the possibility of deepfakes is known as the liar's dividend.

Non-state actors like terrorists and insurgent groups can also use this technology to stir up anti-state sentiment. For instance, an extremist organization may produce a deepfake video showing soldiers disrespecting a religion and ultimately ignite hatred among people. Similarly, a state can spread a fake video about a country or a religion to incite hate and anger against a minority. None of this is hard to do, as even a layperson can create deepfakes with freely available software and ruin a life.

Here are some factors to consider when assessing the negative potential of this technology.

1. TRUST

One of the most terrifying downsides of the technology is that it can make anyone appear to say or do anything. Suppose you see a deepfake of your partner violating your trust, but your partner is innocent. How could you be sure that it’s a deepfake? In the other case, your partner broke your trust but denies it even though you have the evidence. How could you tell whether to blame your partner or deepfake technology?

Researchers at the University of Washington highlighted the pitfalls of generative technology in 2017, releasing a paper explaining how they made fake video of President Barack Obama. Similarly, Mark Zuckerberg, Facebook's Chief Executive, appeared in a fake video crediting a secretive agency for his social network's success. A DeepNudes website was recently proposed that would create pornographic content of anyone by simply superimposing a head onto a nude body. The idea was abandoned, but the technology was still released.

2. FINANCIAL SCAMMING

Criminals use realistic synthetic audio deepfakes to convince people they are talking to someone they trust and then defraud them. In one case, scammers used a deepfake of a tech company's CEO to ask an employee to transfer money to the scammers' account. Scammers used the same strategy last year to defraud a company of $240,000. Deepfakes are also used for blackmail and other nefarious purposes.

From DARPA to the FBI, many organizations are working on ways to identify deepfakes. The biggest drawback of deepfakes is that they corrode people's trust in technology; we may stop trusting TV news, live-streamed events, and even our own eyes.

Right now, GAN technology is mainly used to superimpose a face onto another body, but the time is not far off when it will create a whole new body. Worryingly, the resulting image or video will look accurate enough to fool anyone and break any relationship.

Yet, cons aside, this technology has some exciting advantages, discussed below:

DEEPFAKE PROS

This innovative technology opens up many opportunities for people, regardless of how they listen, communicate, or speak. AI-generated synthetic media has as many positive impacts as negative ones. It has clear benefits in education, criminal forensics, film production, digital communication, social media, business, health, and artistic expression.

1. ART

Star Wars fans are well aware of this technology, which brought back Peter Cushing for 2016’s Rogue One: A Star Wars Story. A deepfake also allows adding or changing the dialogue in a video without reshooting the scene. A UK-based charity used deepfake technology to create a video of David Beckham delivering an anti-malaria message in nine languages. Moreover, the company WPP created training videos with an AI-generated presenter who speaks different languages and addresses viewers individually. Researchers in Moscow used the same technique to bring the Mona Lisa to life by moving her eyes, head, and mouth.

2. FILM INDUSTRY

The technology helps the film industry in several ways; for instance, it can raise video quality from amateur to professional level. It can recreate classic scenes and bring long-dead actors back to the screen. Deepfake technology can also create digital voices for actors who have lost their voices to illness.

It also allows people to see their deceased loved ones in moving videos via "back to life" features.

3. PROFESSIONAL TRAINING

People use deepfake technology to create AI avatars for training videos; for instance, startups like Synthesia, a London-based company, drew worldwide attention during the COVID-19 pandemic because the technology removes the need for real people when shooting videos.

4. RESEARCH AND EDUCATION

Deepfake technology is also used to make personal avatars in apps, so people can check how they would look after a haircut or in clothes they want to buy. Further, several professions use this technology in training applications, even for working from home.

One of the most prominent fields is medicine, where doctors use generative technology to create fake brain scans based on actual patient data. These fake scans help train algorithms to spot tumors in real images. Scientists are also exploring GANs for abnormality detection in X-rays and for creating virtual chemical molecules, which could in turn boost medical discoveries and materials science research.

5. FINANCIAL SAVING

Several industries can use deepfake technology to create advertising and gaming content at a much lower cost. It helps save money and time by reducing the need for meetings with people to understand the concept of their business campaign.

WILL ANY DEVICE BE INVENTED TO SPOT DEEPFAKES?

The adaptive manipulation traces extraction network (AMTEN) is used to detect fake faces. It works as a pre-processing module that reduces the adverse effects of image content. It predicts the manipulation traces in a face image and extracts them if present. Its working principle is based on the difference between the input and output image, which lets it extract the traces left in deepfakes quickly. AMTEN performs well in tamper detection tasks. Integrating the AMTEN module with a convolutional neural network gives AMTENnet, a deep neural network for detecting facial image manipulation in images and videos.
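
The "difference between input and output image" idea can be illustrated with a small sketch. It is not the AMTEN implementation: it assumes a grayscale image stored as a list of rows and uses a fixed 3x3 averaging filter as a stand-in for the weights that AMTEN would learn. The names `filter3x3`, `extract_traces`, and `KERNEL` are hypothetical.

```python
from typing import List

Image = List[List[float]]

# Hypothetical fixed 3x3 averaging kernel; in AMTEN these weights are learned.
KERNEL = [[1 / 9] * 3 for _ in range(3)]


def filter3x3(img: Image, kernel: List[List[float]]) -> Image:
    """Apply a 3x3 filter, replicating edge pixels so the size is unchanged."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    acc += kernel[dy + 1][dx + 1] * img[yy][xx]
            out[y][x] = acc
    return out


def extract_traces(img: Image) -> Image:
    """Residual = filtered output minus input; smooth content largely cancels."""
    filtered = filter3x3(img, KERNEL)
    return [[f - p for f, p in zip(frow, prow)] for frow, prow in zip(filtered, img)]


# A flat patch with one out-of-place pixel, standing in for a local artefact.
patch = [[0.5] * 5 for _ in range(5)]
patch[2][2] = 1.0
traces = extract_traces(patch)
print(f"residual at the artefact: {traces[2][2]:.3f}, in a flat corner: {traces[0][0]:.3f}")
```

Subtracting the input removes most of the ordinary picture content, so what is left is dominated by local irregularities. In the design described above, a downstream convolutional network would then classify those residuals instead of the raw image.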

Earlier work detected whether an image is real or fake using binary classification. Besides binary classification, AMTENnet also supports multi-class classification to detect even minor facial manipulation. Furthermore, facial image manipulation strategies fall into three groups:

- Identity Manipulation: This strategy produces fake faces of entirely imaginary people or replaces one face with another through FaceSwap or deepfake techniques.

- Expression Manipulation: This strategy transfers source face expressions to a target face.

- Attribute Transfer: This technique changes attributes of a face image, such as gender, hair color, or even age.
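
The difference between binary and multi-class detection can be sketched as follows. This assumes a detector that outputs one raw score (logit) per class; the class order, the function names, and the example scores are made up for illustration and do not come from AMTENnet itself.

```python
import math
from typing import List, Tuple

# "real" plus the three manipulation groups listed above (order is an assumption).
CLASSES = ["real", "identity manipulation", "expression manipulation", "attribute transfer"]


def softmax(logits: List[float]) -> List[float]:
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify_multiclass(logits: List[float]) -> Tuple[str, float]:
    """Report which kind of manipulation (if any) is most likely."""
    if len(logits) != len(CLASSES):
        raise ValueError("expected one logit per class")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return CLASSES[best], probs[best]


def classify_binary(logits: List[float]) -> Tuple[str, float]:
    """Collapse all manipulated classes into a single 'fake' label."""
    if len(logits) != len(CLASSES):
        raise ValueError("expected one logit per class")
    p_fake = 1.0 - softmax(logits)[0]
    return ("fake" if p_fake >= 0.5 else "real"), p_fake


scores = [0.2, 0.1, 2.5, 0.3]  # hypothetical detector output for one face
print(classify_binary(scores)[0], "/", classify_multiclass(scores)[0])
```

The binary view only answers "real or fake", while the multi-class view also says which group of manipulation the detector suspects.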

COUNTERMEASURES

AI always has advantages and disadvantages, so we cannot ignore the pros because of the cons. A multimodal, multi-stakeholder approach is an effective way to defend the truth. Countermeasures against the negative impact of deepfakes should be twofold: one aim is to reduce exposure to malicious deepfakes, and the other is to minimize the damage they can cause. Countermeasures against malicious deepfakes fall into four broad categories: platform policies and governance, legislation and regulation, media literacy, and technological intervention.

There is little doubt that deepfakes will circulate on social media even faster in the coming years, so internet companies are developing AI tools to detect them. Facebook has banned deepfakes from its platform. Microsoft has released a deepfake detection tool called Microsoft Video Authenticator. As detection technology grows more robust, deepfake technology is also evolving, making it harder to distinguish real content from fake. The first step in fighting this technology is to learn how deepfakes work.