Top AI Achievements of 2021

AI has proven to be a game-changer over the past decades. It offers extraordinary advantages and has great potential for future automation. Some of the top AI achievements of 2021 include facial identification, text generation, speech recognition, drug discovery, and automated translation.

The AI market is forecast to reach $266.92 billion by 2027, growing at a CAGR (Compound Annual Growth Rate) of 33.2%. Tech giants have already adopted AI solutions in their systems to improve user experience, engagement, services, and product delivery.

According to McKinsey's 2021 survey, AI adoption continues to rise: 56% of respondents (up from 50% in 2020) reported that their organizations had adopted AI in at least one function. Recent studies also show that companies in emerging economies, including China, the Middle East, and North Africa, have been deploying AI solutions.

Let's look at the most remarkable achievements of artificial intelligence in 2021.

1. Computer Vision

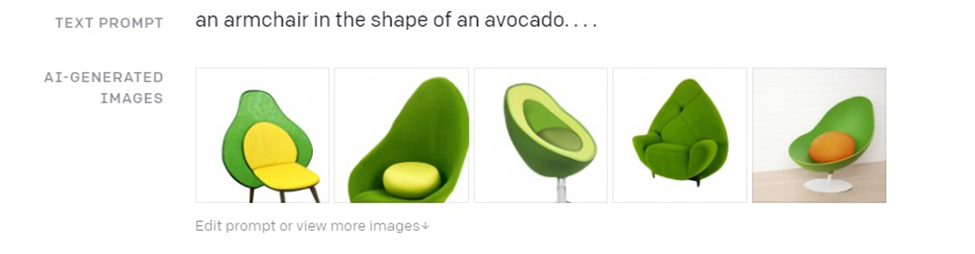

1.1. DALL-E

DALL-E, a neural network developed by OpenAI in 2021, is a computer vision breakthrough that creates images from text descriptions. It has strong zero-shot performance, generating high-quality images even of unusual and absurd objects.

Interestingly, it is not based on GANs (generative adversarial networks), the approach commonly used to train neural networks for image generation, which makes it a genuinely new approach. Unlike earlier advances in the field, it can handle text descriptions it has never seen before. It can also produce anthropomorphized versions of various animals and objects.

Working Strategy

It was trained on 250 million text-image pairs collected from the internet. The model is a 12-billion-parameter version of GPT-3, an autoregressive transformer. Its input is a sequence of tokens (symbols from a discrete vocabulary), and the model is trained to maximize the likelihood of generating those tokens in sequence. Training has two stages. In the first, each image is compressed into a small grid of image tokens, which shrinks the sequence the transformer must handle without a large loss in visual quality. In the second, up to 256 byte-pair-encoded (BPE) text tokens are concatenated with the image tokens, and the autoregressive transformer is trained on the combined sequence.
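The token counts behind this two-stage design can be worked out directly. The sketch below assumes the configuration reported in OpenAI's DALL-E paper (256×256 RGB images compressed into a 32×32 grid of image tokens, up to 256 text tokens); the helper names are illustrative, and `sequence_log_likelihood` only shows the quantity that autoregressive training maximizes, not DALL-E's actual training code.

```python
import math

# Assumed configuration (as reported for DALL-E; treat as illustrative).
IMAGE_SIDE = 256        # input images are 256x256 pixels
CHANNELS = 3            # RGB
GRID_SIDE = 32          # stage 1 compresses each image to a 32x32 grid of tokens
MAX_TEXT_TOKENS = 256   # stage 2 prepends up to 256 BPE text tokens


def image_tokens(grid_side: int) -> int:
    """Number of image tokens produced by stage 1."""
    return grid_side * grid_side


def compression_factor(image_side: int, channels: int, grid_side: int) -> int:
    """Raw pixel values represented by each image token."""
    return (image_side * image_side * channels) // image_tokens(grid_side)


def sequence_length(max_text: int, grid_side: int) -> int:
    """Text tokens followed by image tokens form one autoregressive stream."""
    return max_text + image_tokens(grid_side)


def sequence_log_likelihood(tokens, next_token_prob) -> float:
    """log p(x) = sum_i log p(x_i | x_<i), the quantity training maximizes.

    `next_token_prob(prefix, token)` is any model returning a probability.
    """
    return sum(math.log(next_token_prob(tokens[:i], tok)) for i, tok in enumerate(tokens))


if __name__ == "__main__":
    print(image_tokens(GRID_SIDE))                              # 1024
    print(compression_factor(IMAGE_SIDE, CHANNELS, GRID_SIDE))  # 192
    print(sequence_length(MAX_TEXT_TOKENS, GRID_SIDE))          # 1280

    def uniform(prefix, tok):
        return 0.25  # toy model: 4 equally likely symbols

    print(sequence_log_likelihood([0, 1, 2], uniform))          # about -4.159
```

Under these assumptions, each image token stands in for 192 raw pixel values, and the transformer models a single stream of 1,280 tokens.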

Benefit: The network can control how many times an attribute appears in an image and can render objects in 3D from different viewpoints. It can also draw the internal structure of an object, which requires detailed knowledge that only thorough training provides.

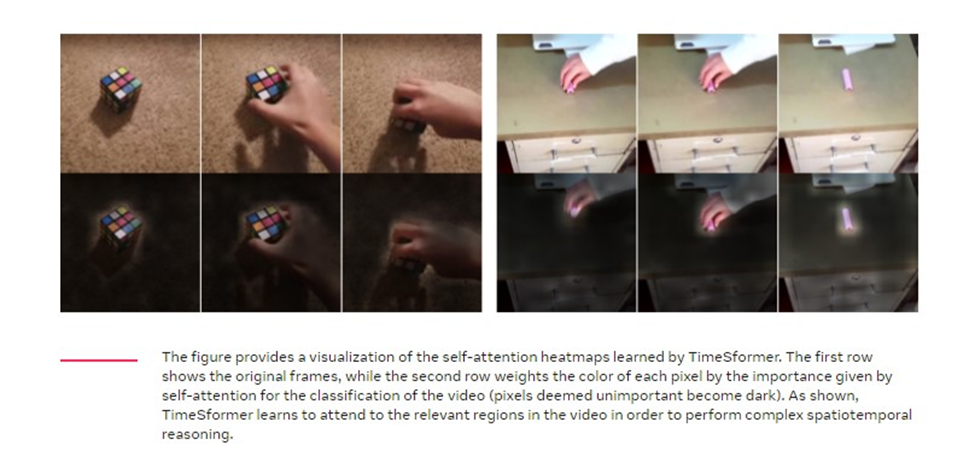

1.2. TimeSformer: New Video Architecture Approach

Developed by Facebook AI, TimeSformer (Time-Space Transformer) is an entirely new approach to video understanding. It is the first video architecture based purely on transformers, and it is comparatively fast.

Working Strategy

It splits each video frame into small, non-overlapping patches and, instead of comparing every patch with every other patch through self-attention, applies attention in separate time and space steps. This is a big step toward processing longer videos: currently available models are suited mainly to clips only a few seconds long.
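A rough way to see the saving is to count attention comparisons. This sketch assumes 224×224 frames, 16×16 patches, and 8 frames per clip (illustrative values) and compares joint space-time attention, where every patch attends to every other patch, with divided attention, where each patch attends first across time at its own position and then across space within its own frame. The function names are hypothetical; the code counts pairs and is not TimeSformer's implementation.

```python
def num_patches(height: int, width: int, patch: int) -> int:
    """Number of non-overlapping patch x patch tiles in one frame."""
    if height % patch or width % patch:
        raise ValueError("frame size must be a multiple of the patch size")
    return (height // patch) * (width // patch)


def split_into_patches(frame, patch):
    """Split a 2D frame (list of rows) into non-overlapping tiles, row-major order."""
    num_patches(len(frame), len(frame[0]), patch)  # validates the frame size
    return [
        [row[c:c + patch] for row in frame[r:r + patch]]
        for r in range(0, len(frame), patch)
        for c in range(0, len(frame[0]), patch)
    ]


def joint_attention_pairs(n: int, t: int) -> int:
    """Every one of the n*t patch tokens attends to every token."""
    tokens = n * t
    return tokens * tokens


def divided_attention_pairs(n: int, t: int) -> int:
    """Each token attends to the t tokens at its position (time),
    then to the n tokens in its own frame (space)."""
    return n * t * (t + n)


if __name__ == "__main__":
    n = num_patches(224, 224, 16)            # 196 patches per frame
    t = 8                                    # frames per clip (illustrative)
    print(joint_attention_pairs(n, t))       # 2458624
    print(divided_attention_pairs(n, t))     # 319872
```

Under these assumptions, divided attention needs roughly 7.7 times fewer comparisons per layer, and the gap widens as clips get longer because joint attention grows with the square of the frame count.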

Benefit: The architecture is scalable and helps build more accurate models. Its self-attention mechanism lets the model capture space-time dependencies across the entire video, and it is computationally cost-effective.

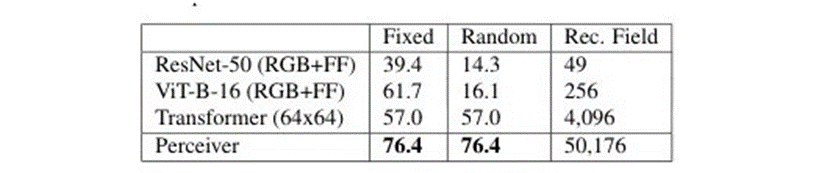

1.3. Perceiver: Compatible with Multimodal Data

DeepMind developed this transformer-based model to handle multimodal data as humans do. Like the human brain, it receives, analyzes, and processes data in several formats simultaneously.

Unlike existing models, it handles multiple types of data. On image classification, it achieved accuracy competitive with the ResNet-50 model. Like other large models, however, it is prone to overfitting, which the researchers worked hard to reduce.

Working Strategy

Perceiver uses a cross-attention layer instead of self-attention over the raw input to reduce time complexity. It treats inputs of every format, including audio, images, and sensor data, as arrays of bytes, so the same model can work with any kind of data. One part of Perceiver reads the actual input, while the other works on a compact latent summary of it, which greatly reduces computation and training time.
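The core idea can be sketched as a single cross-attention step in which a small latent array supplies the queries and the large input array supplies the keys and values, so the cost grows with latents × inputs instead of inputs². This is a simplified sketch: it omits the learned projections, multiple heads, and the latent transformer that follows, and the sizes in the note below are illustrative.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(latents, inputs):
    """Single-head cross-attention without learned projections.

    latents: M vectors of size d (queries); inputs: N vectors of size d
    (used as both keys and values). Returns M vectors of size d.
    """
    d = len(latents[0])
    scale = 1.0 / math.sqrt(d)
    outputs = []
    for query in latents:
        scores = [scale * sum(q * k for q, k in zip(query, key)) for key in inputs]
        weights = softmax(scores)
        outputs.append([sum(w * value[j] for w, value in zip(weights, inputs)) for j in range(d)])
    return outputs


def attention_cost(num_latents: int, num_inputs: int) -> int:
    """Score computations for cross-attention: one per (latent, input) pair."""
    return num_latents * num_inputs


if __name__ == "__main__":
    latents = [[1.0, 0.0], [0.0, 1.0]]             # M = 2 latent vectors
    inputs = [[0.5, 0.1], [0.2, 0.9], [0.4, 0.4]]  # N = 3 input elements
    summary = cross_attention(latents, inputs)
    print(len(summary), len(summary[0]))           # 2 2
```

For example, 512 latents reading a 224×224 image flattened to 50,176 pixels need about 25.7 million score computations, whereas self-attention over the raw pixels would need 50,176², about 2.5 billion.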

Benefit: It is a step toward general-purpose AI models that work across many kinds of data.

1.4. GSLM: First High-Performance NLP Model Based Entirely on Audio

Researchers at Facebook AI introduced GSLM (Generative Spoken Language Model), the first high-performance NLP model that works entirely from audio. Specialists from several fields worked together to develop it and to address issues with current NLP systems.

Working Strategy

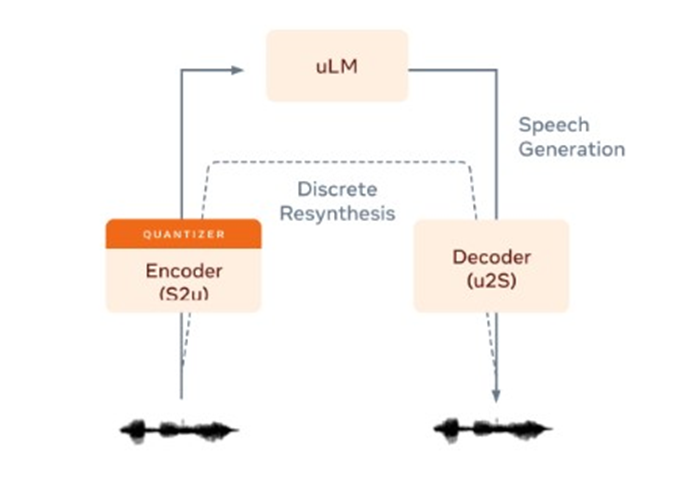

GSLM consists of three parts:

- Encoder: It converts speech into discrete units (pseudo-text) that represent recurring sounds. HuBERT gave the best results as the encoder.

- Autoregressive language model: It predicts the next unit based on the previously seen ones. The researchers used a multi-stream transformer with several heads for this purpose.

- Decoder: The last component converts units back into sound. A standard text-to-speech system, Tacotron 2, is used here. The researchers trained the system on more than 6,000 hours of unlabeled audio.
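The hand-off from encoder to language model can be illustrated with a toy pipeline. In this sketch, speech feature frames are assigned to their nearest centroid (in the spirit of clustering HuBERT features into discrete units), runs of repeated units are collapsed into pseudo-text, and a simple bigram counter stands in for the transformer language model. The centroids and frames are hypothetical one-dimensional values, and the decoder stage is omitted.

```python
from collections import Counter, defaultdict


def quantize(frames, centroids):
    """Encoder stage (sketch): index of the nearest centroid for each frame."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return [min(range(len(centroids)), key=lambda i: sq_dist(f, centroids[i])) for f in frames]


def dedupe(units):
    """Collapse runs of identical units, e.g. [0, 0, 1, 1] -> [0, 1]."""
    collapsed = []
    for unit in units:
        if not collapsed or collapsed[-1] != unit:
            collapsed.append(unit)
    return collapsed


def train_bigram(sequences):
    """Count which unit follows which (a stand-in for the transformer LM)."""
    counts = defaultdict(Counter)
    for seq in sequences:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts, unit):
    """Language-model stage (sketch): most frequent successor, or None."""
    if unit not in counts:
        return None
    return counts[unit].most_common(1)[0][0]


if __name__ == "__main__":
    centroids = [[0.0], [1.0], [2.0]]                    # hypothetical unit centers
    frames = [[0.1], [0.2], [0.9], [1.1], [2.2], [1.9]]  # hypothetical features
    units = quantize(frames, centroids)                  # [0, 0, 1, 1, 2, 2]
    pseudo_text = dedupe(units)                          # [0, 1, 2]
    model = train_bigram([pseudo_text, [0, 1, 2], [0, 2]])
    print(predict_next(model, 0))                        # 1
```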

What makes this system unique is that it removes ASR (automatic speech recognition) from the pipeline, which can increase the speed and accuracy of NLP.

Benefit: It opens new ways to model languages that have little or no written text.

2. Healthcare

2.1. A Novel Approach to Finding How Disease Changes Cells

Researchers developed an image analysis pipeline, TDAExplore, that gives sharp insight into how disease affects individual cells. It combines microscopy images with topology, to capture how elements are arranged, and with the power of AI, to detect cellular changes. Dr. Eric Vitriol said of the study, published in the journal Patterns:

“It is an accessible, powerful option.”

Working Strategy

It generates quantitative, qualitative, and objective information from images, including X-ray and PET scans, and it can run on an ordinary personal computer. The AI mimics how the brain analyzes and assesses data. Topology is well suited to image analysis, and TDAExplore (TDA stands for topological data analysis) helps the computer analyze the basic elements of an image. In this study, for instance, the researchers observed changes in the density of actin, a protein that forms the building blocks of filaments and fibers. The system does not need thousands of training images; as few as 25 are enough.

The computer breaks each image into patches, which enables better classification with less training, so useful data can be extracted sooner. Vitriol first analyzed normal cells to distinguish them from diseased ones. He checked the number of filaments and where they sat in the cell (in the center, near it, or at the edge). The patterns that emerged revealed the presence and arrangement of actin.

Benefit: It shows whether, and how, the arrangement of actin in a cell has changed as a result of damage or disease.

2.2. New Technique to Identify Proteins in the Brain

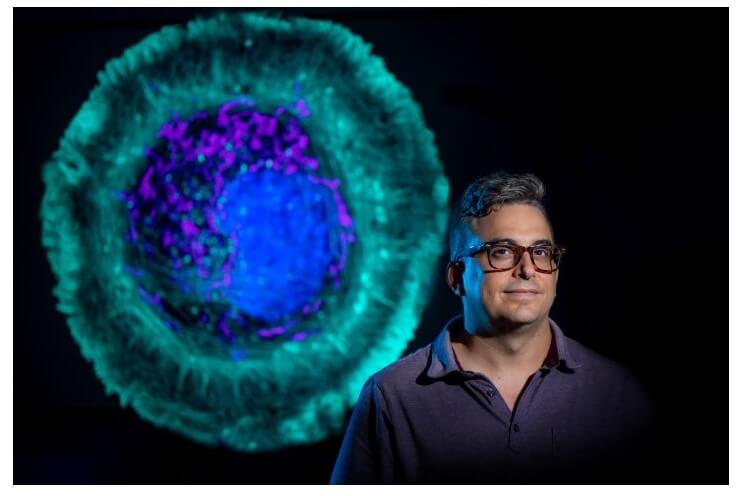

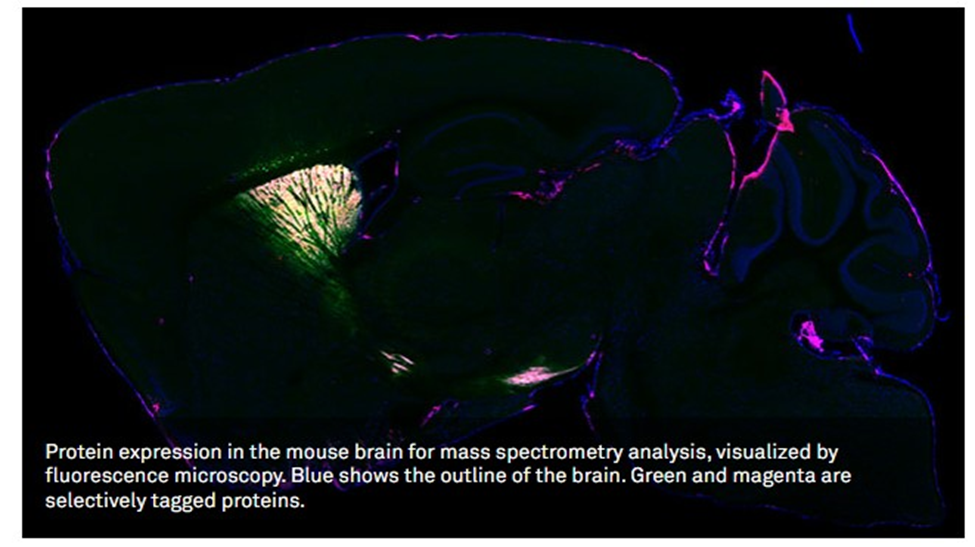

Researchers developed a novel technique to identify proteins in various neurons in the living animal brain.

Working Strategy

The researchers designed a virus that delivers an enzyme to a specific location in the brain of a living mouse. The enzyme, derived from soybean, labels the proteins in its immediate neighborhood. They then examined the brain with electron microscopy and fluorescence imaging and found that their strategy applies to the whole proteome in living neurons. Finally, the results were analyzed with mass spectrometry.

Kozorovitskiy said:

“The virus essentially acts as a message that we deliver. In this case, the message carried this special soybean enzyme. Then, in a separate message, we sent the green fluorescent protein to show us which neurons were tagged. If the neurons are green, we know the soybean enzyme was expressed in those neurons.”

Benefit: With this technique, researchers can study how proteins work in targeted brain areas and how they depend on one another.

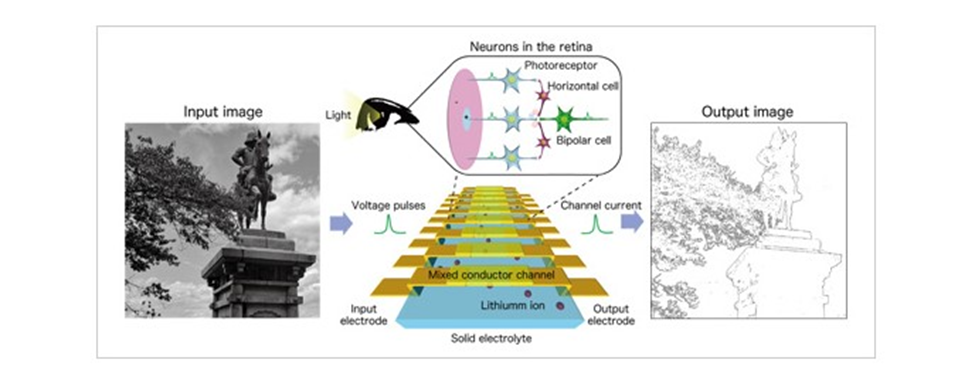

2.3. The First-Ever Artificial Vision Device Mimicking Human Optical Illusions

Researchers at NIMS (National Institute for Materials Science) have developed an artificial vision device that enhances the contrast between dark and light areas of an image, as human vision does. It is considered the first system to mimic human optical illusions.

Working Strategy

The device consists of mixed-conductor channels placed at regular intervals on a solid electrolyte. It responds to input voltage pulses (equivalent to the electrical signals of photoreceptors), mimicking how human retinal neurons (photoreceptors, bipolar cells, and horizontal cells) process visual signals. The pulses cause ions in the solid electrolyte to migrate toward the mixed-conductor array, which changes the output current in a way similar to the response of human visual cells.

Benefit: This achievement will lead to the development of energy-efficient image processing hardware and visual sensing systems that can process analog signals economically. It doesn’t require software and can distinguish different shapes and colors.

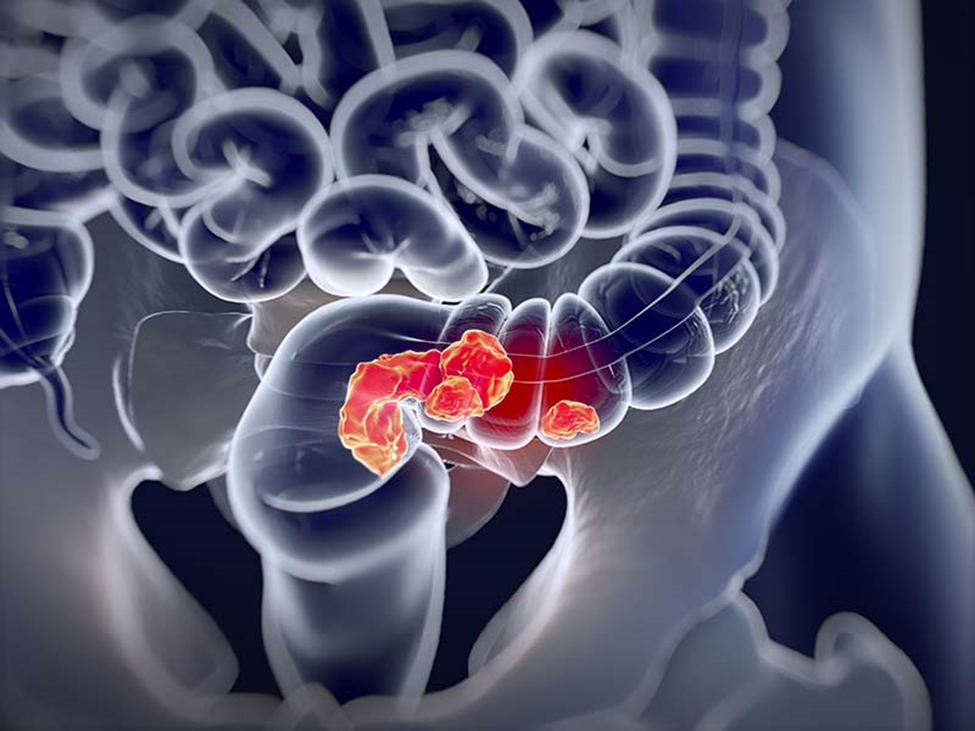

2.4. AI Detects Colorectal Cancer Better than Pathologists, New Study Reveals

This study, published in the journal Nature Communications, comes from researchers at Tulane University, who found that AI can detect and diagnose cancer in scans of colorectal tissue better than experienced pathologists. Traditionally, pathologists evaluate and label many histopathology images to decide whether a patient has cancer.

Working Strategy

Deng and his team collected more than 13,000 images of colorectal cancer from 8,803 subjects at 13 cancer centers in China, the United States, and Germany. They randomly selected images from this collection to develop a machine-assisted pathology recognition program.

Benefits: Colorectal cancer is one of the leading causes of death in America and Europe, and the system lets the computer detect cancer in the images when it is present. When its results were compared with those of a highly experienced pathologist, the machine-assisted system achieved an average score of 0.98. The lead researcher hopes that many pathologists will adopt his system for pre-screening to reach quick decisions.

2.5. Software Detects Premature Babies' Faces to Monitor Heart and Breathing Rates

Facial recognition in adults is common, but using software to detect a premature baby's face is new. The system can monitor a baby's heart and breathing rates, and the researchers report that it outperforms ECGs.

Working Strategy

The researchers used a set of videos of babies in the NICU to detect premature infants' faces and skin tones. The vital signs it measures are comparable to those from an ECG (electrocardiogram), with the advantage that it is a contact-free monitoring technique.

Neonatal care specialists from UniSA (University of South Australia) and engineering researchers monitored seven infants with this technology in the NICU (Neonatal Intensive Care Unit) of a medical center in Adelaide. UniSA professor Javaan Chahl said:

“Babies in neonatal intensive care can be extra difficult for computers to recognize because their faces and bodies are obscured by tubes and other medical equipment. Many premature babies are being treated with phototherapy for jaundice, so they are under bright blue lights, making it challenging for computer vision systems.”

Benefits: It is the first technology of its kind developed for neonatal wards. Because it needs no contact with the skin, it helps avoid skin tearing and the resulting infections.

3. SOCIAL MEDIA

3.1. New Creative Technique for Real-Time Song Lyric Generation

Researchers from the University of Waterloo's Natural Language Processing Lab developed a technology to inspire musicians as they write and finalize their songs. Their real-time AI system, LyricJam, responds to live instrumental music and generates relevant lyric lines.

Working Strategy

The researcher Vechtomova and her students developed the technology, which uses the chord progression, instrumentation, and tempo of the music to write lyrics that reflect the emotions it expresses.

Its neural network learns the themes, stylistic devices, and words associated with different emotions in music. The system continuously receives raw audio clips, which the neural network processes to generate lines in real time. Musicians then use these lines to write their songs.

Its developer explained:

“The purpose of the system is not to write a song for the artist. Instead, we want to help artists realize their creativity. The system generates poetic lines with new metaphors and expressions, potentially leading the artists in creative directions that they haven't explored before.”

Benefits: In a user study, musicians played live music with the system and reported that its uninterrupted stream of lines helped them finish songs faster than before.

3.2. Soft Robotic Hand That Can Play Nintendo

Researchers at the University of Maryland 3D-printed a soft robotic hand agile enough to play Nintendo's Super Mario Bros. and win.

Working Strategy

Soft robots are flexible, inflatable machines powered by air or water rather than electricity, and the researchers wanted to create something unusual in this field. They developed an integrated fluidic circuit that lets the hand respond to a single control pressure: depending on whether the applied pressure is low, medium, or high, the hand presses different buttons. It can complete the first level of the game in less than 90 seconds.

Benefits: Thanks to their inherent adaptability and safety, soft robots like this could be used in biomedical devices such as prosthetics. However, building fluidic circuits into soft robots in a single step remains complex. The design is open source, so anyone can access and modify it.

3.3. Social Media Sarcasm Detector

Computer science researchers at the University of Central Florida developed a social media sarcasm detector.

Working Strategy

The team looked for a way to recognize emotions in text, whether negative, positive, ethical, or unethical. The AI system combines logical data analysis with correctly identifying emotional communication. They taught the model to detect patterns that signal sarcasm, based on cue words that appear together. They fed it a large amount of data and checked its accuracy before introducing it. An assistant professor on the team said:

“Sarcasm isn’t always easy to identify in conversation, so you can imagine it’s pretty challenging for a computer program to do it and do it well.”

Benefits: Social media is one of the most widely used ways to communicate, and with traditional, manual methods it is labor-intensive and time-consuming to understand the emotions behind queries and respond to them correctly. This technology automates much of that work.

4. ROBOTICS

4.1. First Self-Powered Liquid Robot That Runs Without Electricity

Robots do more than entertain humans; they also perform repetitive tasks in industries and labs. Before they can work, however, they need energy, usually from electricity or batteries. Scientists have long tried to eliminate the need for charging, and researchers at Berkeley Lab and the University of Massachusetts have succeeded. They developed a "water-walking liquid robot" that can dive into water, retrieve precious chemicals, return to the surface to deliver them, and repeat the process.

Working Strategy

The liquid robots are tiny sacs only 2 millimeters in diameter. Because they are denser than the surrounding solution, they sink into it and pick up the desired chemicals. They then generate oxygen bubbles that act like little balloons, providing enough lift to carry them to the surface. The bots move back and forth like a pendulum and keep running as long as there is "food" (chemicals) in the solution.

Benefits: The technology produced the first self-powered liquid robot that works on the "buoyancy" principle and operates autonomously. This type of bot can detect several gas types in the environment. This autonomous robotic system can also screen little laboratory samples for clinical use, drug discovery, or synthesis processes.

4.2. Light-Powered Soft Robots Removing Oil Spills from Remote Areas

Researchers at UC Riverside developed Neusbot, a floating robotic film designed to remove contaminants such as oil spills from water, potentially even from seas.

Working Strategy

The film runs on water and is powered by light, so it can clean remote areas where recharging is difficult. It has three layers; the porous middle layer contains water, copper nanorods, and iron oxide. The nanorods convert light into heat, which vaporizes water and propels the film across liquid surfaces. The bottom layer is hydrophobic (water-repelling), so if a heavy wave submerges the film, it returns to the surface undamaged, even in high salt concentrations.

The direction of Neusbot's motion is controlled by changing the angle of the light.

The chemist, Zhiwei Li at UCR said:

“Our motivation was to make soft robots sustainable and able to adapt on their own to changes in the environment. This machine is sustainable if sunlight is used for power and won't require additional energy sources. The film is also re-usable.”

The researchers modeled the Neusbot film on neustons, a category of animals that includes water striders. These insects move across lakes and slow-moving streams in a pulse-like motion, and Neusbot was designed to move on water in a similar pattern.

Benefits: This light-driven motion can be started and steered whenever required. Traditionally, oil is cleaned from ships by hand, but this technology could work like a robotic vacuum that cleans while staying on the surface. The researchers are working on a four-layer version that can absorb oil or other chemicals.

4.3. First Living Robot That Can Reproduce: Xenobot

Scientists have discovered a new form of biological reproduction: living robots that can self-replicate. These computer-designed organisms are made from frog cells. Shaped like Pac-Man, they gather loose single cells in their "mouths" and assemble them into offspring, the new "Xenobots," which look and move like their parents. The offspring then gather cells and make copies of their own, and the process continues.

Working Strategy

Joshua Bongard, a computer science expert at the University of Vermont, said:

“With the right design, they will spontaneously replicate.”

In a Xenopus laevis frog, these embryonic cells would develop into skin, but the scientists had different plans. Levin explained:

“They would be sitting on the outside of a tadpole, keeping out pathogens and redistributing mucus. But we’re putting them into a novel context. We’re giving them a chance to reimagine their multicellularity.”

A xenobot offspring forms as a sphere of about 3,000 cells. After that, the system eventually dies out, and keeping it reproducing is very complex.

Benefits: The xenobots do this with an unaltered, complete frog genome. The research could also contribute to regenerative medicine.

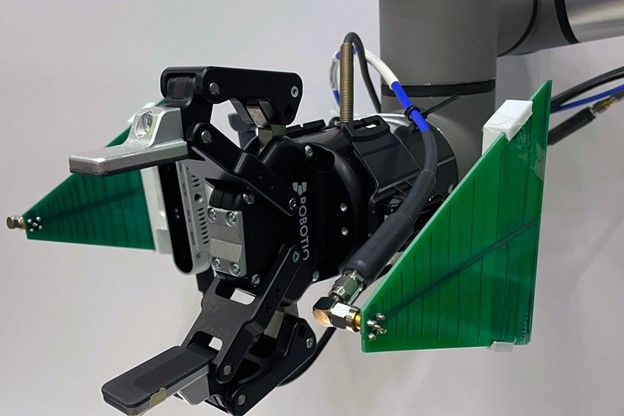

4.4. RFusion: A Robot That Finds Lost Items

MIT researchers have developed RFusion, a robotic arm with a camera and a radio frequency (RF) antenna attached to its gripper. It combines the antenna's signals with the camera's visual input to locate a lost item, even if the item is out of sight or buried under other objects.

Working Strategy

The system relies on cheap, battery-free RFID tags. A tag is stuck to an item and reflects the antenna's signal. Because RF signals can pass through most surfaces, the system can locate a tagged item, such as keys hidden in a pile of laundry.

Benefits: Beyond finding keys at home, it could handle major tasks in warehouses. Future applications could include locating and installing the right parts in automated manufacturing and helping elderly people with routine tasks.
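How RFusion fuses RF and visual data in detail is beyond this overview. As a simplified illustration of the general idea, distance estimates taken from several known gripper positions can be combined to pin down a tag's location. The sketch below uses classic 2D trilateration with three exact distances; the positions are hypothetical, and real RF measurements are noisy and three-dimensional.

```python
def trilaterate(anchors, distances):
    """Estimate a 2D position from distances to three known points.

    Subtracting the circle equations pairwise gives two linear equations
    a*x + b*y = c and d*x + e*y = f, solved here with Cramer's rule.
    """
    (x1, y1), (x2, y2), (x3, y3) = anchors
    d1, d2, d3 = distances
    a, b = 2 * (x2 - x1), 2 * (y2 - y1)
    c = d1**2 - d2**2 - x1**2 + x2**2 - y1**2 + y2**2
    d, e = 2 * (x3 - x2), 2 * (y3 - y2)
    f = d2**2 - d3**2 - x2**2 + x3**2 - y2**2 + y3**2
    det = a * e - b * d
    if abs(det) < 1e-12:
        raise ValueError("anchor positions must not be collinear")
    return (c * e - b * f) / det, (a * f - c * d) / det


if __name__ == "__main__":
    anchors = [(0.0, 0.0), (4.0, 0.0), (0.0, 4.0)]  # hypothetical gripper positions
    distances = [5 ** 0.5, 13 ** 0.5, 5 ** 0.5]     # exact ranges to a tag at (1, 2)
    print(trilaterate(anchors, distances))          # approximately (1.0, 2.0)
```

With noisy ranges, a practical system would take more than three measurements and solve a least-squares version of the same equations.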

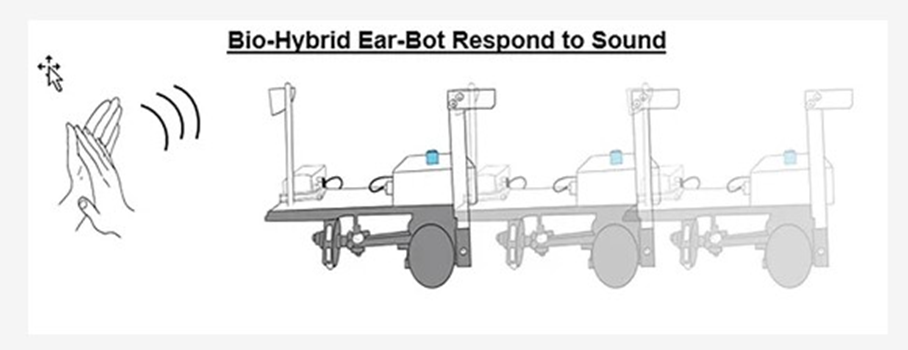

4.5. Robot That Hears Through a Dead Locust's Ear

Scientists at Tel Aviv University successfully connected the ear of a dead locust to a robot that responds correctly to the ear's electrical signals. The results were remarkable: when the researchers clapped once, the robot moved forward, and when they clapped twice, it moved backward.

Working Strategy

The researchers wanted to explore how biological systems can improve technological ones. Dr. Maoz explained:

“We chose the sense of hearing because it can be easily compared to existing technologies, unlike the sense of smell, for example, where the challenge is much greater.”

First, they developed a robot that responds to signals, and then they isolated the ear of a dead locust and kept it working. They replaced the robot's electronic microphone with the insect's ear, which works properly inside the artificial system. The ear picks up sounds from the environment and produces electrical signals, and a special chip converts this input into signals the robot can use.

Benefits: This combination of biology and technology opens the way to sensory integration between insects and robots. The system was developed for hearing but could be adapted for vision, smell, and touch. Combining animal capabilities with robots could, for instance, offer better ways to detect criminals, protect human life, and more.
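The robot's behavior (one clap means forward, two claps mean backward) amounts to counting sound events in the ear's signal. This is a hypothetical sketch of that control logic only: the threshold, the refractory window, and the function names are assumptions, and the real system converted the ear's electrical signals with a dedicated chip.

```python
def detect_claps(signal, threshold, refractory):
    """Return sample indices where |signal| reaches the threshold.

    After an event, further crossings within `refractory` samples are ignored,
    so one clap is counted once.
    """
    events = []
    for i, sample in enumerate(signal):
        if abs(sample) >= threshold and (not events or i - events[-1] >= refractory):
            events.append(i)
    return events


def command_for(signal, threshold=0.5, refractory=5):
    """Map the number of claps to a movement, mirroring the described behavior."""
    claps = len(detect_claps(signal, threshold, refractory))
    if claps == 1:
        return "forward"
    if claps == 2:
        return "backward"
    return "stay"


if __name__ == "__main__":
    single = [0.0] * 10 + [0.9, 0.8] + [0.0] * 10
    double = [0.0] * 5 + [1.0] + [0.0] * 10 + [1.0] + [0.0] * 5
    print(command_for(single))   # forward
    print(command_for(double))   # backward
```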

5. ALGORITHM

5.1. AI Spotting Unseen Signs of Heart Failure

Mount Sinai researchers have developed an algorithm that identifies subtle changes in ECGs (electrocardiograms) to predict heart failure.

Working Strategy

The researchers trained a computer on patients' ECGs, paired with data extracted from written reports for the same individuals. These written reports served as the reference standard, and natural language processing extracted the data from them. By comparing the reports with the ECG data, the computer learned to spot weaker hearts. To recognize how strongly the heart pumps, the researchers used neural networks, which are efficient at recognizing image patterns. Dr. Vaid said:

“We wanted to push state of the art by developing AI capable of understanding the entire heart easily and inexpensively.”

They trained the computer on data from four hospitals and used a fifth to test the algorithm's performance. The computer read more than 700,000 echocardiograms and electrocardiograms from Mount Sinai Health System patients recorded between 2003 and 2020.

Benefits: It was 94% accurate at predicting which patients had a healthy ejection fraction and 87% accurate at identifying those whose ejection fraction was below 40%. However, it was less effective for patients with moderately weakened hearts, whose ejection fractions fell between these two ranges.

5.2. Evolving-to-Learn (E2L)/ Becoming Adaptive Approach: Algorithms Mimicking Biological Evolution

Researchers at the University of Bern developed a new approach based on evolutionary algorithms, which mimic "natural selection" to find solutions to problems.

Working Strategy

The approach is called "E2L" (evolving-to-learn) or "becoming adaptive." In the first task, the computer had to detect a repeating pattern in a continuous stream of input without receiving feedback on its performance. In the second, it had to behave in a specific way and was virtually rewarded for doing so. In the third, "guided learning," it was told how far its results deviated from the desired ones. In this way, the algorithm discovered a "synaptic plasticity mechanism" and solved new tasks.

Benefits: The system borrows nature's solutions to solve real-world problems. In nature, "survival of the fittest" means that only organisms that adapt to their environment survive. Evolutionary algorithms likewise score each candidate's fitness for a specific situation, which could help with tasks such as selecting players or estimating a patient's chances of recovery.
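The selection-and-variation loop at the heart of evolutionary algorithms fits in a few lines. This generic sketch evolves a vector of numbers toward a hypothetical target; in the Bern work the candidates being evolved were synaptic plasticity rules, which this example does not model. The population size, mutation strength, and fitness function are illustrative assumptions.

```python
import random


def fitness(genome, target):
    """Higher is better: negative squared distance to a (hypothetical) target."""
    return -sum((g - t) ** 2 for g, t in zip(genome, target))


def mutate(genome, rng, sigma=0.1):
    """Variation: add small Gaussian noise to every gene."""
    return [g + rng.gauss(0.0, sigma) for g in genome]


def evolve(target, pop_size=20, generations=100, seed=0):
    """Simple evolutionary loop with truncation selection and elitism.

    Returns the best genome found and the best fitness of each generation.
    """
    rng = random.Random(seed)
    population = [[rng.uniform(-1.0, 1.0) for _ in target] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        # Selection: rank by fitness and keep the fitter half.
        population.sort(key=lambda g: fitness(g, target), reverse=True)
        history.append(fitness(population[0], target))
        survivors = population[: pop_size // 2]
        # Reproduction: each survivor contributes one mutated child.
        population = survivors + [mutate(parent, rng) for parent in survivors]
    population.sort(key=lambda g: fitness(g, target), reverse=True)
    return population[0], history


if __name__ == "__main__":
    target = [0.5, -0.2, 0.8]
    best, history = evolve(target)
    print(history[0], history[-1], fitness(best, target))
```

Because the fittest half always survives into the next generation, the best fitness never decreases from one generation to the next.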

5.3. New Algorithm Helps Autonomous Vehicles Navigate Without GPS, Even as Seasons Change

A new algorithm developed at Caltech allows autonomous systems to locate themselves just by looking at their surroundings.

Working Strategy

The researchers used deep learning to remove seasonal effects from the existing VTRN (visual terrain-relative navigation) approach. VTRN compares what the vehicle sees with satellite images, and the two pictures must closely match for it to work. They also handled weather changes with an Instagram-like filter that alters the image's shading. Their self-supervised technique uses AI to find patterns in images that humans might miss.

Benefits: When their algorithm was added to an existing VTRN system, its performance improved to 92%. The team hopes driverless cars will use their work to improve navigation.
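At its simplest, terrain-relative navigation means finding where a camera view fits on a reference map. The sketch below uses normalized cross-correlation (NCC), a classic template-matching score, not the Caltech team's learned method. NCC tolerates uniform changes in brightness and contrast but not seasonal changes such as snow cover, which is the gap a learned approach aims to close. The toy map and view are made up.

```python
import math


def ncc(a, b):
    """Normalized cross-correlation of two equal-size 2D lists (range -1..1)."""
    fa = [v for row in a for v in row]
    fb = [v for row in b for v in row]
    mean_a, mean_b = sum(fa) / len(fa), sum(fb) / len(fb)
    da = [v - mean_a for v in fa]
    db = [v - mean_b for v in fb]
    num = sum(x * y for x, y in zip(da, db))
    den = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    return num / den if den else 0.0


def locate(reference_map, view):
    """Slide `view` over `reference_map`; return (best score, row, col)."""
    vh, vw = len(view), len(view[0])
    best = None
    for r in range(len(reference_map) - vh + 1):
        for c in range(len(reference_map[0]) - vw + 1):
            window = [row[c:c + vw] for row in reference_map[r:r + vh]]
            score = ncc(window, view)
            if best is None or score > best[0]:
                best = (score, r, c)
    return best


if __name__ == "__main__":
    world = [
        [3, 8, 1, 9, 4],
        [7, 2, 6, 0, 5],
        [1, 9, 4, 7, 2],
        [8, 3, 0, 5, 6],
        [4, 6, 2, 1, 9],
    ]
    # A brightness-shifted, lower-contrast "camera view" of the 3x3 area at row 1, col 1.
    view = [[0.5 * v + 10 for v in row[1:4]] for row in world[1:4]]
    print(locate(world, view))  # score close to 1.0 at row 1, col 1
```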

6. TRANSPORT

6.1. New Early Warning System for Self-Driving Cars

Researchers at TUM (Technical University of Munich) developed a new AI-based early warning system for vehicles. It learns from the many traffic situations that drivers face on real roads.

Working Strategy

The system uses cameras and sensors to observe the surroundings and record vehicle data, including steering angle, weather, road condition, speed, and visibility. The researchers trained an RNN (recurrent neural network) to recognize patterns in these data. When it detects a pattern that the driving system could not handle in the past, it warns the driver of a potentially critical situation.

Benefits: In tests with data from BMW vehicles, the system warned of critical situations up to seven seconds before they occurred, with an accuracy of about 85%.

7. SPACE

7.1. Method Developed for Nuclear Explosion Detection

Researchers at UAF (University of Alaska Fairbanks) developed a method that uses single-microphone infrasound monitors to improve the detection of explosions, including nuclear explosions.

Working Strategy

Working from a library of explosion sounds, they detected explosions amid noise with single-channel microphones that record infrasound, sound waves at frequencies below what humans can hear. Existing detection systems use several microphones, an approach that is expensive and hard to maintain; a single-microphone system, by contrast, extends detection capability. They trained the AI system on synthetic explosion sounds so it could recognize real ones.

Benefits: The system efficiently detects large explosions, including chemical and nuclear ones, and can help organizations detect explosions more than 100 km away.

8. AGRICULTURE

8.1. World’s Smallest Fruit Picker Controlled by AI

Physicists from DTU (Technical University of Denmark) achieved a technological breakthrough inspired by insects' ability to suck nutrients from plant cells. Their aim was to reduce the need for harvesting, transporting, and processing. They showed that it is feasible to extract valuable chemicals ("plant metabolites") directly from plant cells, which could ultimately eliminate the need for mechanical and chemical processing.

Working Strategy

The harvester's tip is about 10 microns wide, and it extracts chemicals from cells about 100 microns in size in leaves and fruit. The technology builds on the pre-existing GoogLeNet neural network and machine learning, and the system is trained to recognize images. Magnus explained:

“We used a transfer learning technique, where you use the existing neural network’s ability to recognize different objects in an image. By showing the computer several new images with the manually marked cells, we succeeded in adjusting the network's parameters, so it recognizes the microscopic metabolite-rich cells.”

In this way, the harvester uses its camera to photograph a leaf, runs the image through the software, and recognizes the cells to harvest. A microrobot then extracts the chemicals automatically, leaving the rest of the plant undamaged.

Benefits: This approach is expected to create a new biomass source for sustainable energy production, and it could help extract large amounts of biofuel from trees.
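Transfer learning reuses a network that already recognizes general image features and trains only a small new part on the new task. The sketch below is a deliberately tiny stand-in: `frozen_features` is a hypothetical hand-written function playing the role of a pretrained network's frozen layers (DTU adapted GoogLeNet, possibly adjusting more of its parameters), and a logistic-regression head is the only part trained. The sample intensity values are made up.

```python
import math


def frozen_features(patch):
    """Hypothetical stand-in for a pretrained network's frozen layers.

    Returns [mean intensity, contrast] of a flattened image patch; these
    features are never updated during training.
    """
    mean = sum(patch) / len(patch)
    contrast = max(patch) - min(patch)
    return [mean, contrast]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(samples, labels, lr=0.5, epochs=500):
    """Train only a new logistic-regression head on top of the frozen features."""
    feats = [frozen_features(s) for s in samples]
    weights, bias = [0.0] * len(feats[0]), 0.0
    for _ in range(epochs):
        for x, y in zip(feats, labels):
            p = sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)
            err = p - y  # gradient of the log loss with respect to the logit
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return weights, bias


def predict(weights, bias, patch):
    """True if the head classifies the patch as a metabolite-rich cell."""
    x = frozen_features(patch)
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias) >= 0.5


if __name__ == "__main__":
    # Toy, made-up patches: 1 = metabolite-rich cell, 0 = background.
    samples = [
        [0.8, 0.9, 0.85, 0.95],
        [0.7, 0.9, 0.8, 0.85],
        [0.1, 0.2, 0.15, 0.1],
        [0.2, 0.1, 0.05, 0.15],
    ]
    labels = [1, 1, 0, 0]
    w, b = train_head(samples, labels)
    print(predict(w, b, [0.9, 0.9, 0.8, 0.85]))  # True
```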

9. VIRTUAL REALITY

9.1. Virtual River Cruise Game: Designed to Reduce Pain During Dressing Changes for Burn Patients

Burn pain is more severe than that of many other injuries, and it increases significantly during dressing changes. Henry Xiang and his colleagues developed a VR game to help pediatric burn patients reduce their pain during dressing changes.

Working Strategy

The researchers divided 90 pediatric patients aged 6 to 17 into three treatment groups: standard care, active VR, and passive VR.

“Two factors were considered for the game’s design. First was snow, the cooling factor within the game. The second factor was cognitive processing to encourage active engagement.”

Patients played the game on smartphones with headsets for five to six minutes during dressing changes. In the active VR group, patients engaged with the game actively (without needing to move), while patients in the passive group only watched it.

Benefits: The game is clinically helpful in outpatient care, and because it runs on smartphones, which most patients already know how to use, the approach is practical.

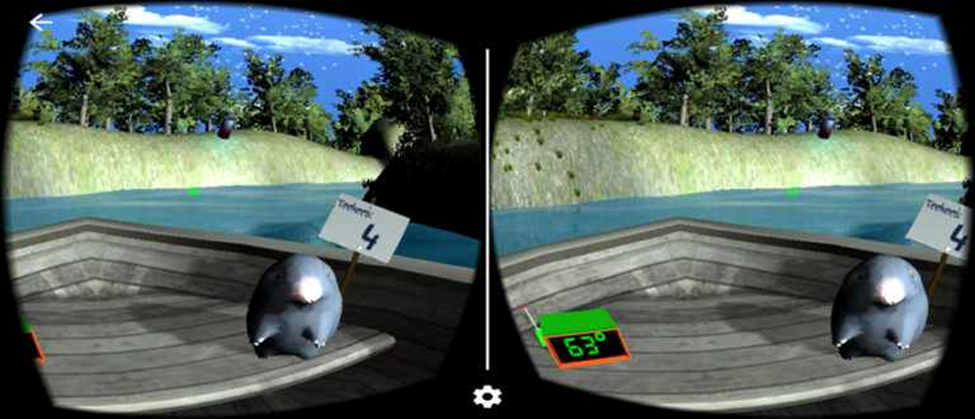

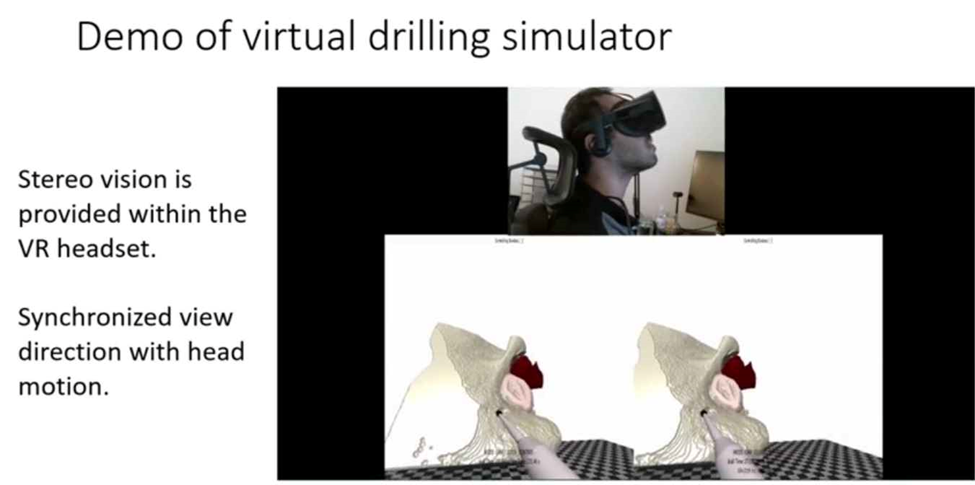

9.2. VR Simulator Training Surgeons for Skull-Base Operations

Researchers at JHU (Johns Hopkins University) developed a new technique to train doctors to complete complex surgery processes with VR technology.

Working Strategy

The system lets surgeons practice in a real-time simulated environment built from CT (computed tomography) scans. It can also record structural data to help train machine learning algorithms that assist surgeons. Munawar explained:

“The data is beneficial for two purposes. Firstly, it could be used to train artificial intelligence algorithms that can assist surgeons in the operating room and make procedures safer. Secondly, by comparing surgical data from the residents in training and expert surgeons, educators could individualize training and make the limited time trainees have for education more efficient.”

Benefits: The system could be introduced in hospitals and medical schools so that trainee surgeons can practice their skills before operating on patients. It also opens new ways to combine VR-based training tools with structural data to train AI algorithms.

10. SECURITY

10.1. WE-FORGE: Building a Better Canary Trap for Cybersecurity

WE-FORGE is a new tool developed at Dartmouth College that uses artificial intelligence to produce fake documents that fool intellectual property (IP) thieves. The "canary trap" strategy spreads several false versions of a document to hide the real one. Governments and organizations mainly use this approach to protect their data from attacks and keep it from being revealed.

Working Strategy

WE-FORGE improves on existing techniques developed by security experts by using NLP to generate multiple fake documents automatically. If a system is hacked, the attackers face the daunting task of figuring out which of many similar documents is the real one.

Its algorithm computes similarities between the concepts in a document and analyzes how relevant each word is to that specific document. It then sorts concepts into "bins" and computes a feasible candidate for each group.

Benefits: It reduces the time needed to create concept guides for specific technologies. It also produces a variety of false information, so it does more than simply conceal information.
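The bin idea can be illustrated with a toy generator: interchangeable concepts are grouped into bins, and each fake replaces every concept found in the text with a different member of the same bin. This is a hypothetical sketch, not WE-FORGE's algorithm, which derives concepts and bins automatically from word relevance and similarity; here the bins are written by hand.

```python
from itertools import islice, product


def find_concepts(words, bins):
    """Map each word position to the bin of interchangeable terms it belongs to."""
    lookup = {term: tuple(group) for group in bins for term in group}
    return {i: lookup[w] for i, w in enumerate(words) if w in lookup}


def make_fakes(text, bins, count):
    """Generate up to `count` fake variants by swapping each concept for
    another member of its bin. Returns [] if the text has no known concepts."""
    words = text.split()
    concepts = find_concepts(words, bins)
    if not concepts:
        return []
    positions = sorted(concepts)
    # Alternatives for each concept: every other term in the same bin.
    choices = [[t for t in concepts[i] if t != words[i]] for i in positions]
    fakes = []
    for combo in islice(product(*choices), count):
        fake = list(words)
        for pos, replacement in zip(positions, combo):
            fake[pos] = replacement
        fakes.append(" ".join(fake))
    return fakes


if __name__ == "__main__":
    bins = [["titanium", "aluminium", "nickel"], ["nitrogen", "helium", "argon"]]
    real = "the alloy uses titanium and is cooled with nitrogen"
    for fake in make_fakes(real, bins, 3):
        print(fake)
    # the alloy uses aluminium and is cooled with helium
    # the alloy uses aluminium and is cooled with argon
    # the alloy uses nickel and is cooled with helium
```

Every fake stays internally plausible because substitutions only happen within a bin, which is what makes picking out the real document hard.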

11. NANOTECH

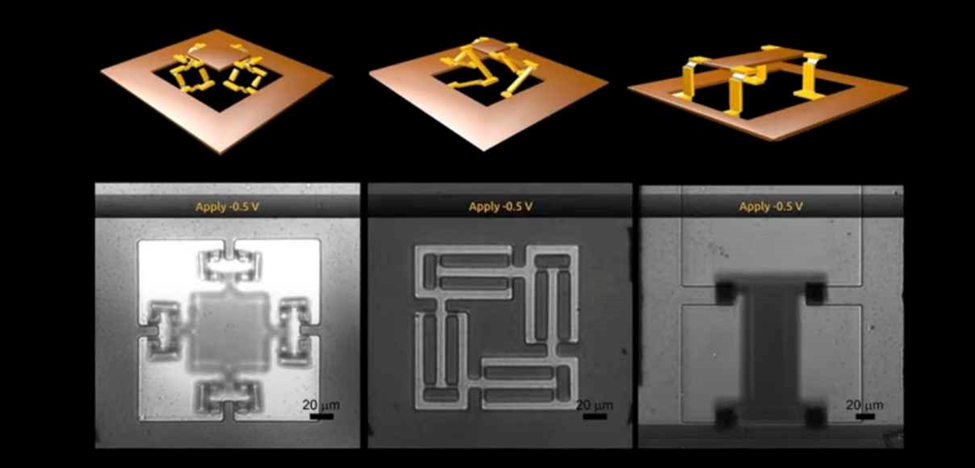

11.1. World’s Smallest Origami Bird Created by Self-Folding Nanotech

Researchers from Cornell University have developed a micron-sized memory actuator that folds atomically thin 2D materials into 3D shapes. It needs only a quick jolt of voltage, and, interestingly, the material keeps its bent shape even after the voltage is removed.

Working Strategy

The device consists of a nanometer-thin layer of platinum capped with a titanium dioxide/titanium film. Several rigid panels of silicon dioxide glass sit on top of these layers. When a positive voltage is applied to the actuators, oxygen atoms are driven into the platinum and swap places with platinum atoms. This process, called oxidation, makes the platinum expand on one side in the seams between the inert glass panels, which bends the structure.

Applying a negative voltage returns the platinum to its original state. By changing the pattern of the glass panels, the researchers created origami-like structures actuated by mountain folds.

The researchers want to make tiny machines with brains, so the appendages are designed to be driven by CMOS (complementary metal-oxide-semiconductor) transistors. Their vision is millions of micron-sized robots released from a wafer that fold themselves into shape, move freely, and perform tasks. McEuen stated:

“But what we haven’t learned how to do is build machines at tiny scales. And this is a step in that basic, fundamental evolution in what humans can do, of learning how to construct machines that are as small as cells.”

Making a material that responds to CMOS circuits was difficult, but the Cornell researchers achieved it with a memory actuator that is driven by voltage and holds its bent shape. They also set a Guinness World Record for the smallest robot.

Benefits: The voltage needs to be applied only once for a fold to hold permanently. The machines fold themselves in about 100 milliseconds, and they can be flattened and refolded several times. These robots could one day be used to remove bacteria from living tissue, and the technology could transform manufacturing. It is also helping to create foldable robot legs and thin, sheet-like robots.
